(In)significant thoughts

“Heads, you get to keep your head.

Tails… not so lucky.”

Harvey Dent in the movie The Dark Knight (2008)

In the age of big data, everyone has heard claims about something being statistically significant or insignificant. But significantly fewer people are able to explain what that truly means. Research shows that even people with formal statistical training misinterpret statistical significance. This post is my (in)significant attempt to clear things up. If you have only 10 seconds to read, jump to the bottom for the lessons. If you have 6 minutes, let me begin with a story.

Harvey Dent (who later transforms into Two-Face), the district attorney of Gotham City in Christopher Nolan’s movie The Dark Knight, had a strange habit: he used to decide important questions with a coin. Take, for example, his casual conversation with the mayor’s hitman while pointing a gun at his head. He flips the coin: if it’s heads, he won’t pull the trigger; if it’s tails, the man is out of luck.

We watch similar flips 3 times before learning that the coin is tricky: both of its sides are heads.

Watching the movie for the first time, this turn took me by surprise. The main reason for the surprise (besides the fact that I am a very naive movie-watcher who falls for all the tricks) is that observing 3 heads in a row is not that unusual with a standard coin either. It can simply come up by chance. Actually, we can calculate how common it is: the probability of observing heads on 1 flip is 50%, so the probability of observing 3 heads in a row is 50% * 50% * 50%, that is 1/2 to the third power, which is 1/8 or 12.5%. Not that unusual.
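The same arithmetic fits in a few lines of Python. This is only a minimal sketch: the function name `prob_all_heads` is my own invention, and it assumes the flips are independent.

```python
from fractions import Fraction

def prob_all_heads(flips: int, p_heads: Fraction = Fraction(1, 2)) -> Fraction:
    """Probability that every one of `flips` independent flips comes up heads."""
    return p_heads ** flips

three_in_a_row = prob_all_heads(3)
print(three_in_a_row, float(three_in_a_row))  # 1/8 0.125
```

Using `Fraction` keeps the result exact, so the 1/8 from the text shows up as 1/8 rather than as a rounded decimal.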

What if we watched 4 heads in a row? 5 heads? 10 heads? Could it come up by mere chance? After 10 heads, even naive movie-watchers like me would start to smell a rat.

Why? Is it impossible to observe 10 heads in a row with a fair coin? No.

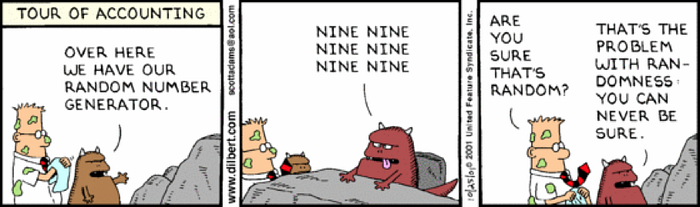

You can never be sure. There is some chance to observe even 10 heads in a row with a fair coin. There is some chance to observe even 100 heads in a row. There is some chance to observe 6 nines in a row with a random number generator. Chance is a tricky thing — you can never be sure.

You could be sure only if you observed the coin itself. But we do not see the coin, nor what is inside the random number generating cutie monster. We only observe the outcomes of the processes.

Nevertheless, observing 10 heads in a row with a fair coin is quite unusual. We can expect it in less than 1 out of 1,000 cases. It is so unlikely that we just do not believe it can happen. It is so unlikely that we cannot accept the result as generated by mere chance: there must be something profound there. Observing 10 heads in a row with a fair coin is so unlikely that we would rather conclude that the coin is tricky. 10 heads in a row is quite inconsistent with a fair coin, but it is totally consistent with a tricky (only-heads) coin.

Some extreme observation is too unlikely to accept as generated by chance, so we cannot reconcile it with our starting hypothesis (having a fair coin). We conclude that our starting hypothesis was wrong and that the real state of the world must be different (the coin has to be tricky).

This is what we call statistical significance.

What counts as too unlikely? Typically, a probability below 5% (mainly for historical reasons).
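To make the 5% rule concrete, here is a small sketch that asks how long a streak of heads has to be before a fair coin becomes "too unlikely". The helper name `shortest_suspicious_streak` is hypothetical, and the only assumption is a fair coin with independent flips.

```python
def shortest_suspicious_streak(threshold: float = 0.05) -> int:
    """Smallest number of consecutive heads whose probability
    under a fair coin falls below `threshold`."""
    flips = 1
    while 0.5 ** flips >= threshold:
        flips += 1
    return flips

print(shortest_suspicious_streak())       # 5, because 0.5**5 = 0.03125
print(shortest_suspicious_streak(0.001))  # 10, because 0.5**10 is about 0.00098
```

So with the usual 5% cut-off, the 3 flips in the movie are not enough to call the coin tricky, but 5 heads in a row would be.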

How to make coins in your business?

The Harvey-coin example describes an extreme world with only two states. Observing one tail immediately excludes the only-heads scenario. Typically, our world is less extreme. We have to differentiate between scenarios that are not that different. We have to deal with biased coins: they look like fair coins, but they come up heads more often than tails.

Why do we care that much about coins? Because many real-world events (the kind that could bring you real coins) are quite similar to them. At Emarsys, we develop marketing automation software, so we care a lot about conversion rates. Is it more likely that a person opens an email if she gets it at a personalised time? Which subject line leads to a higher click-through rate? Do individualised product recommendations on the website lead to a higher purchase rate?

Statistically, conversion is just like a coin flip. A sent email is either opened (heads) or not (tails). We have two processes: sending at a personalised time, and sending with a general setting. Both coins are biased, as the opening probability will not be exactly 50%. But the real question is: which coin is more biased? Is it better to send emails at a personalised time, or is it no different from sending them the traditional way?

Unfortunately, we cannot answer this upfront as we do not observe the processes (coins) themselves. But we can try them (flip) and measure their realisations (heads or tails). We can set up an experiment where half of the recipients get their messages with send time optimisation, and the other half get them at a fixed time. Let’s say we find that the first group opens 18% of the messages while the second opens 15% of them. What can we conclude? Does it show that send time optimisation is better (more biased), or is the difference just mere chance?

The sad news is that our previous lesson also applies here: you can never be sure. The good news is that we can use the measurements to draw conclusions about the state of the world. We can calculate the chance of observing an open rate of at least 18% if the true opening probability were only 15% (that is, no better than with the standard way). If this chance is less than 5%, we say the result is significant. We can then conclude that send time optimisation performs better: it has a positive effect on opening probability.
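Here is a rough sketch of that calculation. The group size of 1,000 emails is a number I made up for illustration, and the sketch treats the 15% baseline as known exactly. That is a simplification: a proper comparison of two groups would also account for the noise in the second group. The function name `binom_tail` is hypothetical too.

```python
import math

def binom_tail(successes: int, trials: int, p: float) -> float:
    """P(X >= successes) for X ~ Binomial(trials, p), with 0 < p < 1.
    Each term is computed in log space to avoid overflow."""
    total = 0.0
    for k in range(successes, trials + 1):
        log_pmf = (
            math.lgamma(trials + 1) - math.lgamma(k + 1) - math.lgamma(trials - k + 1)
            + k * math.log(p) + (trials - k) * math.log(1 - p)
        )
        total += math.exp(log_pmf)
    return total

sent = 1000      # hypothetical number of emails in the personalised group
opened = 180     # 18% of them were opened
baseline = 0.15  # opening probability under the no-effect scenario

p_value = binom_tail(opened, sent, baseline)
print(p_value < 0.05)  # True
```

With these made-up numbers the chance comes out well below 5%, so we would call the result significant. With a much smaller group, the very same 18% might not clear the bar.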

Significant

So, the effect of send time optimisation is statistically significant. Does it mean that send time optimisation is better for sure?

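No. Even if the opening probabilities were exactly the same, we would still see a difference this large in up to 5% of such experiments. So a significant result can still be a false alarm. This is what “you can never be sure” means.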

Does it mean that the difference is considerable, “significant”?

No. Significant means only statistical significance. It does not mean economic significance. If we collected a huge bunch of observations (tons of coin flips), we could detect a very small difference as well. We could say that send time optimisation had a (statistically) significant effect even though the difference is only 0.01 percentage points, and thus practically negligible.
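A quick sketch shows how this happens. It uses a two-proportion z-test with the normal approximation, which is a standard textbook test that I am swapping in for illustration. The group sizes and open counts are invented, and `one_sided_p_value` is a hypothetical helper name.

```python
import math

def one_sided_p_value(opens_a: int, sent_a: int, opens_b: int, sent_b: int) -> float:
    """Two-proportion z-test (normal approximation): the chance of seeing
    group A beat group B by at least this much if both rates were equal."""
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (opens_a / sent_a - opens_b / sent_b) / std_err
    return 0.5 * math.erfc(z / math.sqrt(2))  # upper tail of the standard normal

# The same tiny gap (15.01% vs 15.00%) at two hypothetical sample sizes.
small = one_sided_p_value(1_501, 10_000, 1_500, 10_000)
huge = one_sided_p_value(150_100_000, 1_000_000_000, 150_000_000, 1_000_000_000)

print(small < 0.05)  # False: ten thousand emails per group cannot see the gap
print(huge < 0.05)   # True: a billion emails per group can
```

The gap is identical in both cases. Only the amount of data changed, which is exactly why "significant" says nothing about whether the difference is worth caring about.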

But still, significance helps a lot when deciding under uncertainty. It makes it easier to differentiate between scenarios that came up just by pure chance and scenarios that originate from something profoundly interesting.

Insignificant

What could we say if our results were insignificant? Does it mean that send time optimisation is not better?

No. It only means that the measurement is consistent with the no-effect scenario as well. We cannot say that the measurement is unlikely enough to reject the idea that the opening probabilities are the same. There are two possible reasons: (1) there is really no effect, or (2) there is just too much noise to measure the difference. So it does not mean that there is no effect, only that we do not have enough evidence to decide. (So let’s collect more data.)
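The "too much noise" case is easy to see in a simulation. The sketch below assumes the effect is real (true opening probabilities of 18% and 15%) and counts how often an experiment of a given size comes out significant. The group sizes, the number of repetitions, the seed, and both function names are my own choices for illustration, and the test is again a two-proportion z-test with the normal approximation.

```python
import math
import random

def one_sided_p_value(opens_a: int, sent_a: int, opens_b: int, sent_b: int) -> float:
    """Two-proportion z-test (normal approximation), upper tail."""
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (opens_a / sent_a - opens_b / sent_b) / std_err
    return 0.5 * math.erfc(z / math.sqrt(2))

def share_significant(per_group: int, rate_a: float = 0.18, rate_b: float = 0.15,
                      experiments: int = 300, seed: int = 1) -> float:
    """Fraction of simulated experiments that reach significance at 5%,
    given that the true rates really are rate_a and rate_b."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(experiments):
        opens_a = sum(rng.random() < rate_a for _ in range(per_group))
        opens_b = sum(rng.random() < rate_b for _ in range(per_group))
        if one_sided_p_value(opens_a, per_group, opens_b, per_group) < 0.05:
            hits += 1
    return hits / experiments

print(share_significant(200))   # small experiments: the real effect is usually missed
print(share_significant(3000))  # large experiments: the real effect is usually found
```

In both runs the effect exists by construction. The small experiments mostly return "insignificant" anyway, which is why an insignificant result is not proof of no effect.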

As you can see, significance is a tricky thing (like the coin of Harvey Dent). But it is still one of our best solutions to deal with uncertainty.

As promised, I conclude by repeating the lessons:

Take-aways

- You can never be sure.

- Significance helps to decide.

- Significant does not necessarily mean considerable.